Myanmar, Genocide and Free Basics Facebook

In Myanmar, Facebook has also been used to spread misinformation and propaganda that fueled violence on the brink of genocide against the Rohingya, an ethnic minority. The pain of low-end phones stands particularly stark now that the company is grappling with the violence in Myanmar, which coincided with a dramatic rise in Facebook users: from 2 million in 2014 to 30 million today. The rise is, in part, attributable to Free Basics.

So while we’re on the subject of totalitarian corporate social media, i.e. Facebook, this article by Abigail Higgins, a journalist dedicated to covering issues in East Africa, reports on Facebook’s role in violence and authoritarian regimes in the developing world. This time, however, the issue touches “Buddhism” directly: Facebook’s low-bandwidth, low-budget Free Basics service played a key role in amplifying ethnic extremism in Myanmar, which in turn fed the genocide of the Rohingya.

Facebook Doesn’t Need To Engineer World Peace, But It Doesn’t Have To Fuel Violence

In the U.S. and the U.K., companies like Facebook and Cambridge Analytica might be complicit in voter manipulation. In the global south, are they causing ethnic conflict?

Data is “the electricity of our new economy,” whistleblower Christopher Wylie said last week when he testified that his former employer, Cambridge Analytica, had indeed exploited personal data to affect Brexit, Britain’s exit from the European Union.

“We enjoy the benefits of electricity, despite the fact that it can literally kill you,” he said.

Americans have since learned that the data of 87 million people, mostly Americans, was shared with Cambridge Analytica and may have been used to sway the election of Donald Trump. Wylie had put the original number at 50 million. Then, earlier this week, Facebook announced that the majority of its more than 2 billion users had likely had their data scraped at some point.

Between Brexit, Trump, and increasingly damning admissions from Facebook, the world is waking up to the immense power, and threat, of data. Facebook is experiencing a backlash as a result: the company has lost almost $100 billion in market value in recent weeks.

But the threat of data, just as with electricity, is not spread evenly across the globe.

In the U.S. and the U.K., companies like Facebook and Cambridge Analytica might be complicit in voter manipulation; in other parts of the world, they may be tools of mass violence.

This month, an undercover investigation recorded Cambridge Analytica’s senior executives gloating about the heavy-handed role they played in getting Kenyan President Uhuru Kenyatta elected last year, after a contentious election that resulted in almost 100 deaths. It was also an election in which ethnically fueled hate speech and propaganda filled Facebook News Feeds across the nation. In Myanmar, Facebook has also been used to spread misinformation and propaganda that fueled violence on the brink of genocide against the Rohingya, an ethnic minority. Likewise in South Sudan, where a brutal civil war has killed tens of thousands and created two and a half million refugees, fake news and hate speech on Facebook have helped push the country toward genocide.

Could these companies actually be responsible for mass violence? And if so, where should the blame lie: with the people who spread the hoaxes, the platform that enabled their spread, or someone in between?

It’s a problem Mark Zuckerberg likely did not anticipate when he set about getting the world online, which he called “one of the most important things we all do in our lifetimes” in 2014. Facebook COO Sheryl Sandberg echoed his utopian vision: “If the first decade was starting the process of connecting the world, the next decade is helping connect the people who are not yet connected and watching what happens.”

The uptake of Facebook in the global south has been rapid; in fact, it has accounted for the majority of Facebook’s growth in recent years. This has a lot to do with Free Basics, a stripped-down version of the internet for the unconnected poor, comprising a series of “free” curated apps. Its altruistic rationale is that connectivity is a human right and Free Basics is a cheap, efficient way to fulfill that right. The more self-serving motivation is that, with most of North America and Europe saturated, the only way for Facebook to get more users was to get more of the world online.

A 2014 Time Magazine article chronicles the beginnings of the Free Basics experiment, in which Facebook built a lab in its Silicon Valley offices to mimic the suboptimal computing conditions of the developing world, including poor network connections.

“As Zuckerberg himself puts it, when you work at a place like Facebook, ‘it’s easy to not have empathy for what the experience is for the majority of people in the world,’” wrote journalist Lev Grossman. “To avoid any possible empathy shortfall, Facebook is engineering empathy artificially.” Grossman added that Javier Olivan, Facebook’s head of growth, forced a lot of his employees to use the low-end phones ubiquitous in the developing world because “you need to feel the pain.”

The pain of low-end phones stands particularly stark now that the company is grappling with the violence in Myanmar, which coincided with a dramatic rise in Facebook users — from 2 million in 2014 to 30 million today. The rise is, in part, attributable to Free Basics.

It’s not the only concerning aspect of Free Basics: India banned it because regulators believed it violated net neutrality by essentially creating a lesser version of the internet for the poor. Kenyan political analyst Nanjala Nyabola, the author of a forthcoming book about the internet and Kenyan politics, pointed out that the opportunities it created for surveillance and censorship were “an African dictator’s dream.”

But, when it comes to violence, can Facebook really be held responsible for what its platform is used for?

Hate and violence predate the internet, of course. The web just gives hate a chance to spread more quickly. Fake news, such as the hoax that Hillary Clinton ran a child sex ring out of a pizza restaurant, spread like wildfire through the Facebook accounts of Trump supporters; similarly, doctored photos and anti-Rohingya propaganda spread through Myanmar’s Free Basics. Rumors and hoaxes travel efficiently online, in part through companies like Cambridge Analytica.

Cambridge Analytica and Facebook are two different beasts. One is a company accused of deliberately meddling in foreign elections — in fact, Wylie said that his former boss “enjoyed the colonial challenge of manipulating the affairs of less developed countries.” The other is a company that, through some combination of ambition and hubris, now has control over the personal information of a quarter of the world’s population.

And it’s powerful data: one study found that a computer model analyzing Facebook likes needed just 10 likes to know you better than a work colleague, 70 to know you better than a roommate, 150 better than a parent or sibling, and 300 better than a spouse. This remarkable insight is what Cambridge Analytica is accused of exploiting to manipulate voters during the Brexit vote and Trump’s election, and Facebook is accused of letting them use that data.

But is that same data being used to convince people to kill each other?

The answer is probably not — at least, not yet.

In Kenya, there’s little evidence that Cambridge Analytica had much of an effect on the outcome of the election, despite their boasts otherwise.

“It’s a company that everyone loves to hate, for good reasons, and I think that they are involved in some dirty tricks and some very negative messaging which is clearly completely irresponsible, especially in places like Kenya with a history of election violence,” says Gabrielle Lynch, a professor of comparative politics at the University of Warwick. “But to suggest that they basically won the election in Kenya is to overstate the case.”

But social media use has increased in each of Kenya’s past three elections, says Lynch, which could be a concerning indicator of its role in future elections.

And Facebook doesn’t seem to be taking the accusations particularly seriously, certainly not as seriously as interference in the U.S. election.

“Facebook now has a political function they didn’t set out to perform and they don’t seem to have a sense of responsibility about the political position they’re playing in the developing world,” says Nyabola.

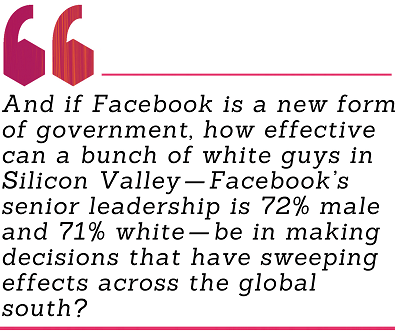

Ezra Klein interviewed Mark Zuckerberg this week and asked him about his assertions that Facebook, with such a massive user base, now acts more like a government than a traditional company. “When Facebook gets it wrong, the consequences are on the scale of when a government gets it wrong. Elections can lose legitimacy, or ethnic violence can break out,” says Klein. So what is Facebook’s responsibility? And if Facebook is a new form of government, how effective can a bunch of white guys in Silicon Valley — Facebook’s senior leadership is 72% male and 71% white — be in making decisions that have sweeping effects across the global south?

“They haven’t really thought much about what that means and they haven’t really thought through how their platform intersect with politics outside the west,” said Nyabola. “They haven’t really given much attention to people outside the west.”

When asked about the violence in Myanmar in the same interview with Klein, Zuckerberg gave a fairly glib answer, considering the stakes. “One of the things I think we need to get better at as we grow is becoming a more global company. We have offices all over the world, so we’re already quite global. But our headquarters is here in California and the vast majority of our community is not even in the U.S.”

He added that Facebook had been effective in quashing hate speech in Myanmar, an answer that quickly drew anger from civil society groups within the country, who came together to write an open letter in response.

“This case exemplifies the very opposite of effective moderation,” they wrote. “It reveals an over-reliance on third parties, a lack of a proper mechanism for emergency escalation, a reticence to engage local stakeholders around systemic solutions and a lack of transparency.”

It’s an interaction that suggests that, despite the labs, Facebook still doesn’t have empathy for the experiences of the majority of people in the world — and that empathy shortfall might have something to do with the guy at the top.

BRIGHT Magazine is made possible by funding from the Bill & Melinda Gates Foundation. BRIGHT retains editorial independence.
